November/December 2003

By Dr. James A. Larson

Recently, the W3C Multimodal Working Group published a first working draft of EMMA, the Extensible MultiModal Annotation markup language (www.w3.org/TR/emma/). EMMA is intended to represent the semantics of information entered via various input modalities, as well as the integrated information that results from combining those inputs.

Using EMMA

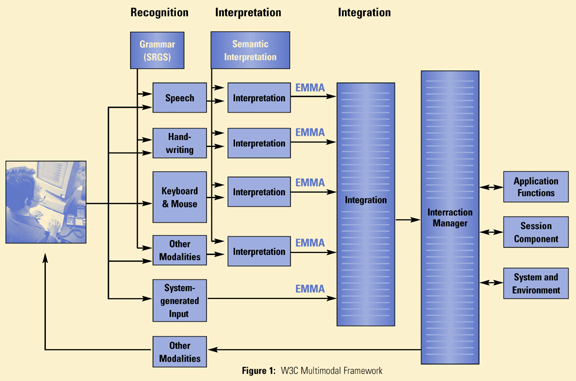

EMMA’s use is illustrated in Figure 1.

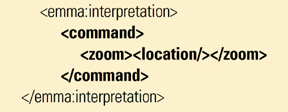

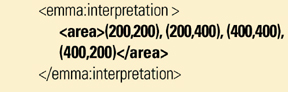

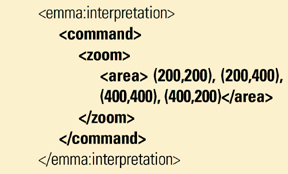

Figure 1 shows a part of the W3C multimodal framework and how users can enter information using speech, ink, keyboard and mouse, and other modalities. Normally, EMMA is not written by humans. It is generated by software components for use by other software components. User information is recognized and interpreted by modality-specific components, including speech recognizers, handwriting recognizers, and keyboard and mouse device drivers. The information entered using each modality is represented with a common language, and this is where EMMA comes in. Each of the modality-specific recognizers/interpreters converts the user-supplied information into an EMMA representation. Examples of the EMMA representation include:

This EMMA representation would then be processed by a dialog manager that would respond to the user by zooming into the area contained by the series of points.
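As a rough illustration of the flow just described, the Python sketch below has a stand-in ink recognizer emit an XML representation of a pen gesture as a series of points, and a stand-in dialog manager compute the rectangle to zoom into. The element and attribute names (`interpretation`, `gesture`, `point`, `mode`) and both function names are hypothetical, chosen for this sketch; they are not taken from the EMMA working draft.

```python
import xml.etree.ElementTree as ET


def ink_to_xml(points):
    """Stand-in for an ink recognizer: wrap a series of (x, y) points in XML.

    All element and attribute names here are hypothetical, not EMMA syntax.
    """
    root = ET.Element("interpretation", {"mode": "ink"})
    gesture = ET.SubElement(root, "gesture", {"type": "area"})
    for x, y in points:
        ET.SubElement(gesture, "point", {"x": str(x), "y": str(y)})
    return ET.tostring(root, encoding="unicode")


def zoom_rectangle(xml_text):
    """Stand-in for a dialog manager: find the box enclosing the points."""
    root = ET.fromstring(xml_text)
    xs = [float(p.get("x")) for p in root.iter("point")]
    ys = [float(p.get("y")) for p in root.iter("point")]
    if not xs:
        raise ValueError("no points in the representation")
    # (left, top, right, bottom) of the area the user circled
    return (min(xs), min(ys), max(xs), max(ys))


message = ink_to_xml([(10, 20), (40, 25), (35, 60), (12, 55)])
print(zoom_rectangle(message))
```

The point of the sketch is only that the dialog manager works from the shared representation and never needs to know which device produced it.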

EMMA Descriptions

A typical EMMA description consists of three types of information that are useful to describe user-entered information:

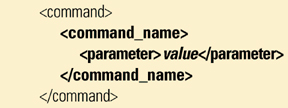

1. Data model — A schema to describe the names and structure of data entered by the user, such as:

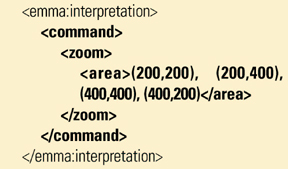

2. Instance data — The information entered by the user via various input modalities:

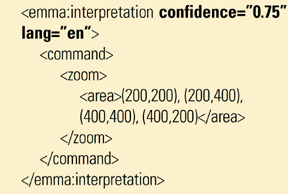

3. Meta data — The annotation of instance data. This includes information generated by speech and handwriting recognizers, integration processors, and other information that may be useful to backend information processors. For example, the confidence factor assigned by a recognizer and the natural language being used by the user:
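To make the split between instance data and its annotations concrete, here is a minimal Python sketch that attaches a confidence score and a language tag to a piece of user input and then reads them back. The `emma:` prefix, the placeholder namespace URI, the attribute names `confidence` and `lang`, and the field names `origin` and `destination` are all assumptions for illustration and may not match the syntax in the working draft. The data model is left implicit here.

```python
import xml.etree.ElementTree as ET

# Placeholder namespace for this sketch; not the URI used by the W3C draft.
NS = "http://example.org/emma-sketch"
ET.register_namespace("emma", NS)


def annotate(instance, confidence, lang):
    """Wrap instance data (a dict of field -> value) with metadata.

    The annotation names `confidence` and `lang` are hypothetical.
    """
    interp = ET.Element(
        f"{{{NS}}}interpretation",
        {f"{{{NS}}}confidence": str(confidence), f"{{{NS}}}lang": lang},
    )
    for field, value in instance.items():
        ET.SubElement(interp, field).text = value
    return interp


def read_back(interp):
    """Separate the metadata (attributes) from the instance data (children)."""
    metadata = {
        "confidence": float(interp.get(f"{{{NS}}}confidence")),
        "lang": interp.get(f"{{{NS}}}lang"),
    }
    instance = {child.tag: child.text for child in interp}
    return metadata, instance


doc = annotate({"origin": "Boston", "destination": "Denver"}, 0.75, "en-US")
print(ET.tostring(doc, encoding="unicode"))
print(read_back(doc))
```

In this sketch the annotations ride on the same element as the instance data; keeping them in a separate structure would be an equally plausible design.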

EMMA Concepts

EMMA defines a number of concepts, including:

The EMMA language is still evolving. The Multimodal Working Group solicits your feedback about the above concepts and how they should be represented in the EMMA language. The Working Group is also evaluating whether annotations should be integrated tightly with instance data or kept separate from it. The language will likely change in response to feedback from practitioners. However, with the publication of the first working drafts for EMMA and InkML, the Ink Markup Language (www.w3.org/TR/InkML/), the W3C Multimodal Working Group has taken an important step in the creation of languages enabling multimodal applications.

If EMMA is widely adopted, then components from different vendors will be able to interoperate. For example, a speech recognizer from Vendor A, a handwriting recognizer from Vendor B, an integration component from Vendor C, and an interaction manager from Vendor D will be able to transfer EMMA statements among themselves. EMMA will become the interlingua among components of multimodal systems. This will enable developers to create multimodal platforms by choosing the “best of breed” or “least expensive” option for each component type.

EMMA will become an important language for integrating user input entered via different modalities. Because every device represents its information in a common format, input from different devices can be integrated into a single representation for processing by dialog managers, inference engines, or other advanced information processing components.
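One way to picture this integration step is the Python sketch below, which merges a spoken command and a pen gesture into a single structure for a dialog manager. The `Interpretation` class, its fields, and the rule of keeping the lower of the two confidence scores are assumptions made for this sketch; the article does not specify how integration is performed.

```python
from dataclasses import dataclass, field


@dataclass
class Interpretation:
    """Hypothetical common format shared by all modality components."""
    mode: str                       # e.g. "speech" or "ink"
    confidence: float               # recognizer's confidence, 0.0 to 1.0
    slots: dict = field(default_factory=dict)


def integrate(first, second):
    """Combine two interpretations into one.

    Slots from both inputs are merged; a slot filled by both inputs with
    different values is treated as a conflict. The combined confidence is
    the lower of the two, a conservative choice made for this sketch.
    """
    for name in first.slots.keys() & second.slots.keys():
        if first.slots[name] != second.slots[name]:
            raise ValueError(f"conflicting values for slot {name!r}")
    return Interpretation(
        mode=f"{first.mode}+{second.mode}",
        confidence=min(first.confidence, second.confidence),
        slots={**first.slots, **second.slots},
    )


speech = Interpretation("speech", 0.8, {"action": "zoom"})
ink = Interpretation("ink", 0.9, {"area": (10, 20, 40, 60)})
combined = integrate(speech, ink)
print(combined)
```

Because both inputs arrive in the same shape, the integration function needs no modality-specific code, which is the practical benefit of a common format.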